Simulated human eye movement aims to train metaverse platforms

Obtaining data on how people’s eyes move while watching TV or reading books is an arduous process, one that metaverse app developers can now skip thanks to Duke Engineering’s “virtual eyes.” Credit: Maria Gorlatova, Duke University

Computer engineers at Duke University have developed virtual eyes that simulate the way humans look at the world with enough precision that companies can train virtual reality and augmented reality programs. Called EyeSyn for short, the program will help developers build apps for the rapidly expanding metaverse while protecting user data.

The results have been accepted and will be presented at the International Conference on Information Processing in Sensor Networks (IPSN), May 4-6, 2022, a leading annual forum on research in networked sensing and control.

“If you want to detect if a person is reading a comic or advanced literature by just looking at their eyes, you can do that,” said Maria Gorlatova, Nortel Networks assistant professor of electrical and computer engineering at Duke.

“But training this kind of algorithm requires data from hundreds of people wearing headsets for hours at a time,” Gorlatova added. “We wanted to develop software that not only reduces the privacy concerns of collecting this kind of data, but also allows small businesses that don’t have these levels of resources to enter the metaverse game.”

The poetic insight describing the eyes as windows to the soul has been repeated since at least biblical times for good reason: the tiny ways our eyes move and our pupils dilate provide a surprising amount of information. Human eyes can reveal whether we are bored or excited, where our concentration is focused, whether we are experts or novices at a given task, or even whether we are fluent in a specific language.

“Where you prioritize your vision also says a lot about you as a person,” Gorlatova said. “It can inadvertently reveal gender and racial biases, interests we don’t want others to know about, and information we may not even know about ourselves.”

Eye movement data is invaluable to companies building platforms and software in the metaverse. For example, reading a user’s eyes allows developers to tailor content to engagement responses or to lower the rendering resolution in the user’s peripheral vision to save computing power.

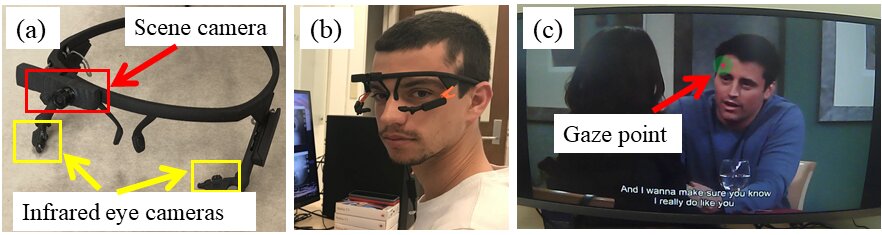

With this level of complexity, creating virtual eyes that mimic how an average human responds to a wide variety of stimuli sounds like a daunting task. To climb the mountain, Gorlatova and her team, including former postdoctoral associate Guohao Lan, who is now an assistant professor at Delft University of Technology in the Netherlands, and current doctoral student Tim Scargill, delved into the cognitive science literature that explores how humans see the world and process visual information.

For example, when a person watches someone speak, their eyes alternate between the person’s eyes, nose, and mouth for varying lengths of time. When developing EyeSyn, the researchers created a model that extracted where these features are on a speaker and programmed their virtual eyes to statistically emulate the time spent focusing on each region.
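The idea of statistically emulating time spent on each facial region can be illustrated with a minimal sketch. This is not EyeSyn’s actual model: the region probabilities, the mean fixation durations, the exponential dwell-time distribution, and the function names `simulate_fixations` and `time_share` are all assumptions chosen for illustration.

```python
import random

# Hypothetical facial regions with made-up, illustrative statistics:
# the probability that a fixation lands on the region and the mean
# fixation duration in seconds. These are NOT values from the study.
REGIONS = {
    "eyes": {"prob": 0.5, "mean_dwell_s": 0.40},
    "nose": {"prob": 0.2, "mean_dwell_s": 0.25},
    "mouth": {"prob": 0.3, "mean_dwell_s": 0.35},
}


def simulate_fixations(total_time_s, seed=None):
    """Return a list of (region, start_s, duration_s) covering total_time_s."""
    rng = random.Random(seed)
    names = list(REGIONS)
    weights = [REGIONS[name]["prob"] for name in names]
    fixations = []
    t = 0.0
    # Stop once the remaining time is negligible (guards against float drift).
    while total_time_s - t > 1e-9:
        region = rng.choices(names, weights=weights, k=1)[0]
        # Assumed exponential dwell time with the region's mean duration,
        # clipped so the sequence never runs past the requested length.
        dwell = rng.expovariate(1.0 / REGIONS[region]["mean_dwell_s"])
        dwell = min(dwell, total_time_s - t)
        fixations.append((region, t, dwell))
        t += dwell
    return fixations


def time_share(fixations):
    """Fraction of total viewing time spent on each region."""
    total = sum(duration for _, _, duration in fixations)
    share = {name: 0.0 for name in REGIONS}
    for region, _, duration in fixations:
        share[region] += duration
    return {name: value / total for name, value in share.items()}


if __name__ == "__main__":
    sequence = simulate_fixations(60.0, seed=1)
    print(time_share(sequence))
```

Running the function with different seeds yields many distinct fixation sequences that share the same underlying statistics, which is the sense in which such a generator can stand in for repeated recordings of human viewers.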

“If you give EyeSyn many different inputs and run it enough times, you will create a synthetic eye movement data set large enough to train a (machine learning) classifier for a new program,” said Gorlatova.

To test the accuracy of their synthetic eyes, the researchers turned to publicly available data. They first had the synthetic eyes “watch” videos of Dr. Anthony Fauci addressing the media at press conferences and compared the output to eye movement data from actual viewers. They also compared a virtual dataset of their synthetic eyes looking at art with real datasets collected from people browsing a virtual art museum. The results showed that EyeSyn was able to closely match the distinct patterns of real gaze signals and simulate the different ways that different people’s eyes respond.
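One simple way to quantify how closely synthetic gaze matches real gaze is to compare how viewing time is distributed across regions of interest. The article does not say which metric the researchers used, so the sketch below substitutes a generic measure, the Jensen-Shannon divergence, and the dwell times in the usage example are placeholder numbers, not study data.

```python
import math


def normalize(seconds_per_region):
    """Turn per-region dwell times into a probability distribution."""
    total = sum(seconds_per_region.values())
    return {k: v / total for k, v in seconds_per_region.items()}


def js_divergence(p, q):
    """Jensen-Shannon divergence in bits between two distributions.

    Returns 0.0 for identical distributions and 1.0 for distributions
    that share no regions at all.
    """
    keys = set(p) | set(q)
    # Mixture distribution used as the reference for both KL terms.
    m = {k: 0.5 * (p.get(k, 0.0) + q.get(k, 0.0)) for k in keys}

    def kl(a):
        return sum(
            a[k] * math.log2(a[k] / m[k])
            for k in keys
            if a.get(k, 0.0) > 0.0
        )

    return 0.5 * kl(p) + 0.5 * kl(q)


if __name__ == "__main__":
    # Placeholder dwell times in seconds (illustrative only).
    real = normalize({"eyes": 30.0, "nose": 10.0, "mouth": 20.0})
    synthetic = normalize({"eyes": 28.0, "nose": 12.0, "mouth": 20.0})
    print(js_divergence(real, synthetic))
```

A value near zero indicates that the synthetic and real viewers split their attention in nearly the same proportions; larger values indicate a mismatch worth investigating.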

According to Gorlatova, this level of performance is good enough for companies to use as a baseline for training new metaverse platforms and software. Starting from that basic level of competence, commercial software can then achieve even better results by personalizing its algorithms after interacting with specific users.

“Synthetic data alone isn’t perfect, but it’s a good starting point,” Gorlatova said. “Small businesses can use it rather than spending time and money trying to create their own real-world datasets (with human subjects). And since the customization of algorithms can be done on local systems, people don’t have to worry about their private eye movement data being part of a big database.”

Study: maria.gorlatova.com/wp-content … 022/03/EyeSyn_CR.pdf

Conference: ipsn.acm.org/2022/

Provided by Duke University

Citation: Simulated human eye movement aims to train metaverse platforms (March 7, 2022) Retrieved March 7, 2022 from https://techxplore.com/news/2022-03-simulated-human-eye-movement-aims.html
